
Entropy information theory

11/24/2023

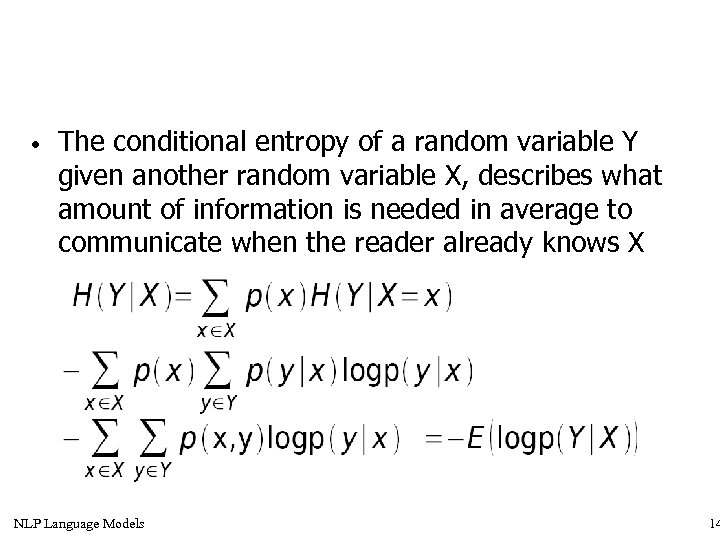

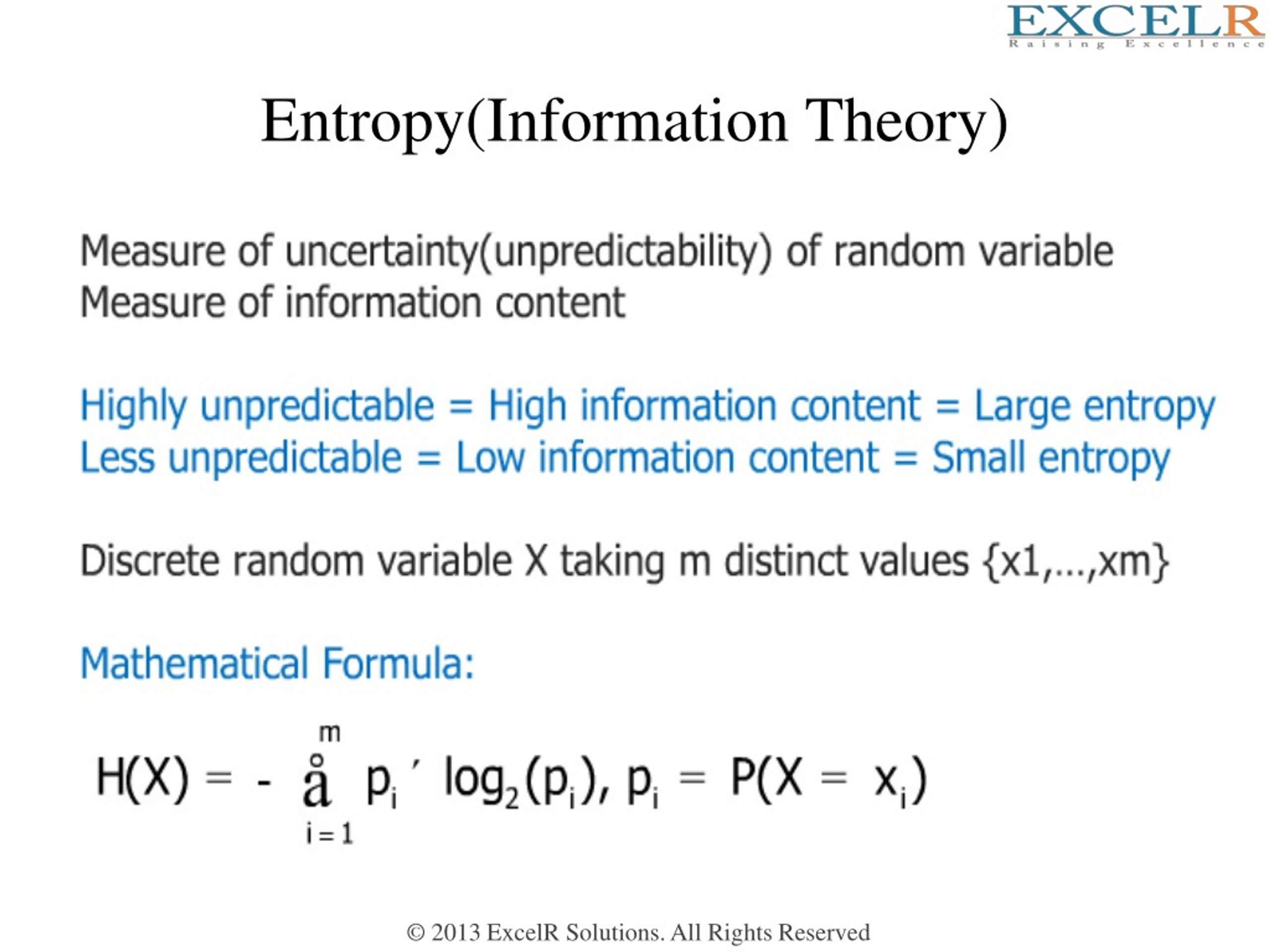

If we ask whether a fact about a person identifies that person, it turns out that the answer isn't simply yes or no. If all I know about a person is their ZIP code, I don't know who they are. If all I know is their date of birth, I don't know who they are. If all I know is their gender, I don't know who they are. But it turns out that if I know these three things about a person, I could probably deduce their identity! Each of the facts is partially identifying.

There is a mathematical quantity which allows us to measure how close a fact comes to revealing somebody's identity uniquely. That quantity is called entropy, and it's often measured in bits. Intuitively you can think of entropy as a generalization of the number of different possibilities there are for a random variable: if there are two possibilities, there is 1 bit of entropy; if there are four possibilities, there are 2 bits of entropy, etc. Adding one more bit of entropy doubles the number of possibilities.

Because there are around 7 billion humans on the planet, the identity of a random, unknown person contains just under 33 bits of entropy (two to the power of 33 is about 8.6 billion). When we learn a new fact about a person, that fact reduces the entropy of their identity by a certain amount:

ΔS = −log₂ Pr(X = x)

where ΔS is the reduction in entropy, measured in bits, and Pr(X = x) is simply the probability that the fact would be true of a random person. The formula can be applied to any fact about a person whose probability is known.

A lottery shows how the probability of an event determines how much information a message about it carries. The knowledge that some particular number will not be the winning number of a lottery provides very little information, because any particular chosen number will almost certainly not win. However, knowledge that a particular number will win a lottery has high informational value because it communicates the outcome of a very low probability event.
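As a rough illustration of how the entropy reduction behaves, here is a minimal Python sketch. It assumes the formula in question is the standard self-information ΔS = −log₂ Pr(X = x). The function name `bits_of_information` and the probabilities for star signs, birthdays, and lottery odds are illustrative assumptions, not figures from the text; only the population of roughly 7 billion comes from the passage above.

```python
from math import log2


def bits_of_information(probability: float) -> float:
    """Entropy reduction in bits from learning a fact with this probability."""
    if not 0.0 < probability <= 1.0:
        raise ValueError("probability must be in the interval (0, 1]")
    return -log2(probability)


# Population figure quoted in the text ("around 7 billion humans").
WORLD_POPULATION = 7_000_000_000
# Bits needed to single out one person among that many.
identity_bits = log2(WORLD_POPULATION)

# Hypothetical probabilities, assuming uniform distributions for simplicity.
facts = {
    "star sign (1 in 12)": 1 / 12,
    "birthday (1 in 365)": 1 / 365,
    "a given lottery number wins (assumed 1 in 1,000,000)": 1e-6,
    "a given lottery number loses": 1 - 1e-6,
}

for name, probability in facts.items():
    print(f"{name}: {bits_of_information(probability):.6f} bits")

print(f"identity of a random person: {identity_bits:.2f} bits")
```

Under these assumptions a star sign is worth about 3.58 bits and a birthday about 8.51 bits, against roughly 32.7 bits needed to identify one person. Learning that a lottery number lost is worth almost nothing, while learning that it won is worth nearly 20 bits.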

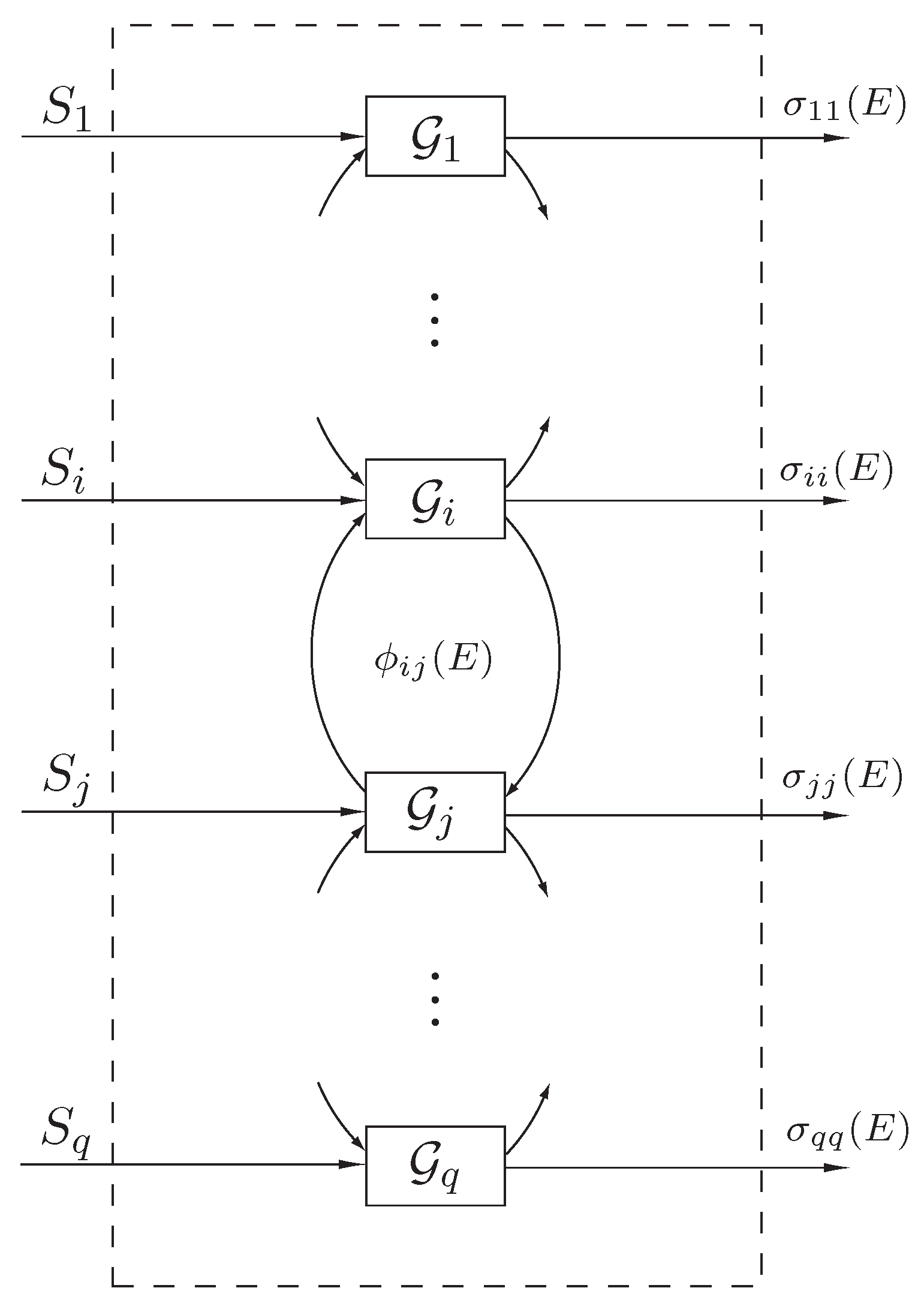

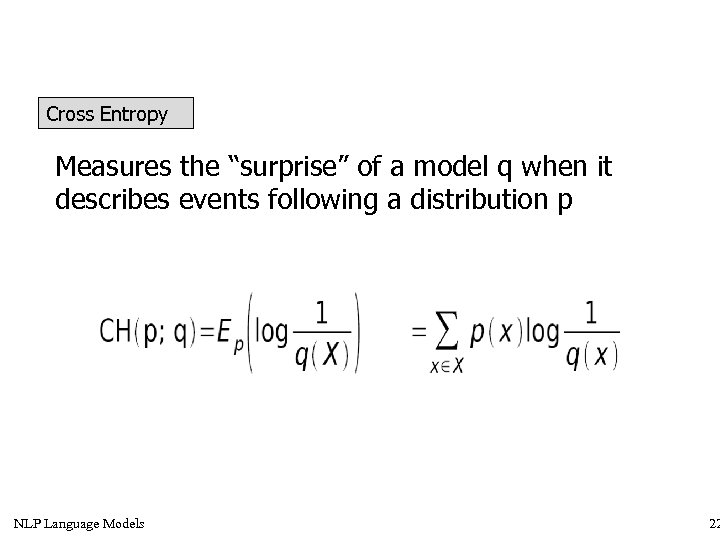

The core idea of information theory is that the "informational value" of a communicated message depends on the degree to which the content of the message is surprising. If a highly likely event occurs, the message carries very little information. On the other hand, if a highly unlikely event occurs, the message is much more informative.

Claude Shannon's theory defines a data communication system composed of three elements: a source of data, a communication channel, and a receiver. The "fundamental problem of communication", as expressed by Shannon, is for the receiver to be able to identify what data was generated by the source, based on the signal it receives through the channel. Shannon considered various ways to encode, compress, and transmit messages from a data source, and proved in his famous source coding theorem that the entropy represents an absolute mathematical limit on how well data from the source can be losslessly compressed onto a perfectly noiseless channel. Shannon strengthened this result considerably for noisy channels in his noisy-channel coding theorem.

Entropy in information theory is directly analogous to the entropy in statistical thermodynamics. The analogy results when the values of the random variable designate energies of microstates, so the Gibbs formula for the entropy is formally identical to Shannon's formula. Entropy has relevance to other areas of mathematics such as combinatorics and machine learning. The definition can be derived from a set of axioms establishing that entropy should be a measure of how informative the average outcome of a variable is. For a continuous random variable, differential entropy is analogous to entropy.
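The compression limit in the source coding theorem can be checked numerically on a small case. The sketch below is only an illustration under stated assumptions: it computes the Shannon entropy H = −Σ p log₂ p of a hypothetical four-symbol source and compares it with the average codeword length of a Huffman code. Huffman coding is chosen here as one example of a lossless code; the text does not name a particular scheme, and the function names and probabilities are invented for the example.

```python
import heapq
from math import log2


def shannon_entropy(probs: dict) -> float:
    """Average information per symbol in bits: H = -sum(p * log2(p))."""
    return -sum(p * log2(p) for p in probs.values() if p > 0)


def huffman_code_lengths(probs: dict) -> dict:
    """Return the codeword length, in bits, that Huffman coding gives each symbol."""
    # Heap entries are (probability, tie_breaker, {symbol: depth_so_far}).
    # The unique tie_breaker keeps Python from ever comparing the dicts.
    heap = [(p, i, {symbol: 0}) for i, (symbol, p) in enumerate(probs.items())]
    heapq.heapify(heap)
    next_id = len(heap)
    while len(heap) > 1:
        p1, _, depths1 = heapq.heappop(heap)
        p2, _, depths2 = heapq.heappop(heap)
        # Merging two subtrees pushes every symbol in them one level deeper.
        merged = {s: d + 1 for s, d in {**depths1, **depths2}.items()}
        heapq.heappush(heap, (p1 + p2, next_id, merged))
        next_id += 1
    return heap[0][2]


def average_code_length(probs: dict, lengths: dict) -> float:
    """Expected number of bits per symbol under the given codeword lengths."""
    return sum(probs[s] * lengths[s] for s in probs)


# Hypothetical source whose probabilities are powers of 1/2.
source = {"a": 0.5, "b": 0.25, "c": 0.125, "d": 0.125}
lengths = huffman_code_lengths(source)

print(f"entropy:             {shannon_entropy(source):.2f} bits/symbol")
print(f"Huffman average len: {average_code_length(source, lengths):.2f} bits/symbol")
```

For this source both numbers come out to 1.75 bits per symbol, so the code meets the entropy bound exactly. For probabilities that are not powers of 1/2, the Huffman average length sits slightly above the entropy but never below it.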

Information entropy is the average amount of information conveyed by an event, when considering all possible outcomes. The concept of information entropy was introduced by Claude Shannon in his 1948 paper "A Mathematical Theory of Communication", and is also referred to as Shannon entropy.

Two bits of entropy: In the case of two fair coin tosses, the information entropy in bits is the base-2 logarithm of the number of possible outcomes; with two coins there are four possible outcomes, and two bits of entropy.
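The coin-toss case can be reproduced by listing the outcomes and taking the base-2 logarithm of their count. This is a small sketch that assumes fair, independent tosses, so that all outcomes are equally likely; the helper name `uniform_entropy_bits` is made up for the example.

```python
from itertools import product
from math import log2


def uniform_entropy_bits(num_outcomes: int) -> float:
    """Entropy in bits of a variable with equally likely outcomes."""
    return log2(num_outcomes)


# Enumerate every outcome of two fair coin tosses: HH, HT, TH, TT.
outcomes = ["".join(tosses) for tosses in product("HT", repeat=2)]
print(outcomes)                               # ['HH', 'HT', 'TH', 'TT']
print(uniform_entropy_bits(len(outcomes)))    # 2.0

# Each extra toss doubles the number of outcomes and adds one bit.
for tosses in range(1, 5):
    count = 2 ** tosses
    print(tosses, count, uniform_entropy_bits(count))
```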
